Giftpack Case Study Series #BFSI

Across global Banking, Financial Services, and Insurance (BFSI) organizations, incentives consistently improve engagement, response rates, and relationship momentum. Yet fewer than 30% of large institutions operate incentives under a formally governed model. The remainder rely on informal processes or avoid incentives altogether, citing compliance risk, audit exposure, and operational burden. Institutions that redesign incentives as a governed capability report measurable improvements in relationship velocity, operational efficiency, and audit readiness, without increasing regulatory risk.

1. The Structural Problem: Incentives Fail When They Are Unowned

In BFSI organizations, incentives are rarely questioned for their effectiveness. Internal pilots and market benchmarks consistently show that well-timed incentives increase response rates by 1.8×–2.6× in complex, trust-driven sales and relationship cycles.

The real issue is ownership.

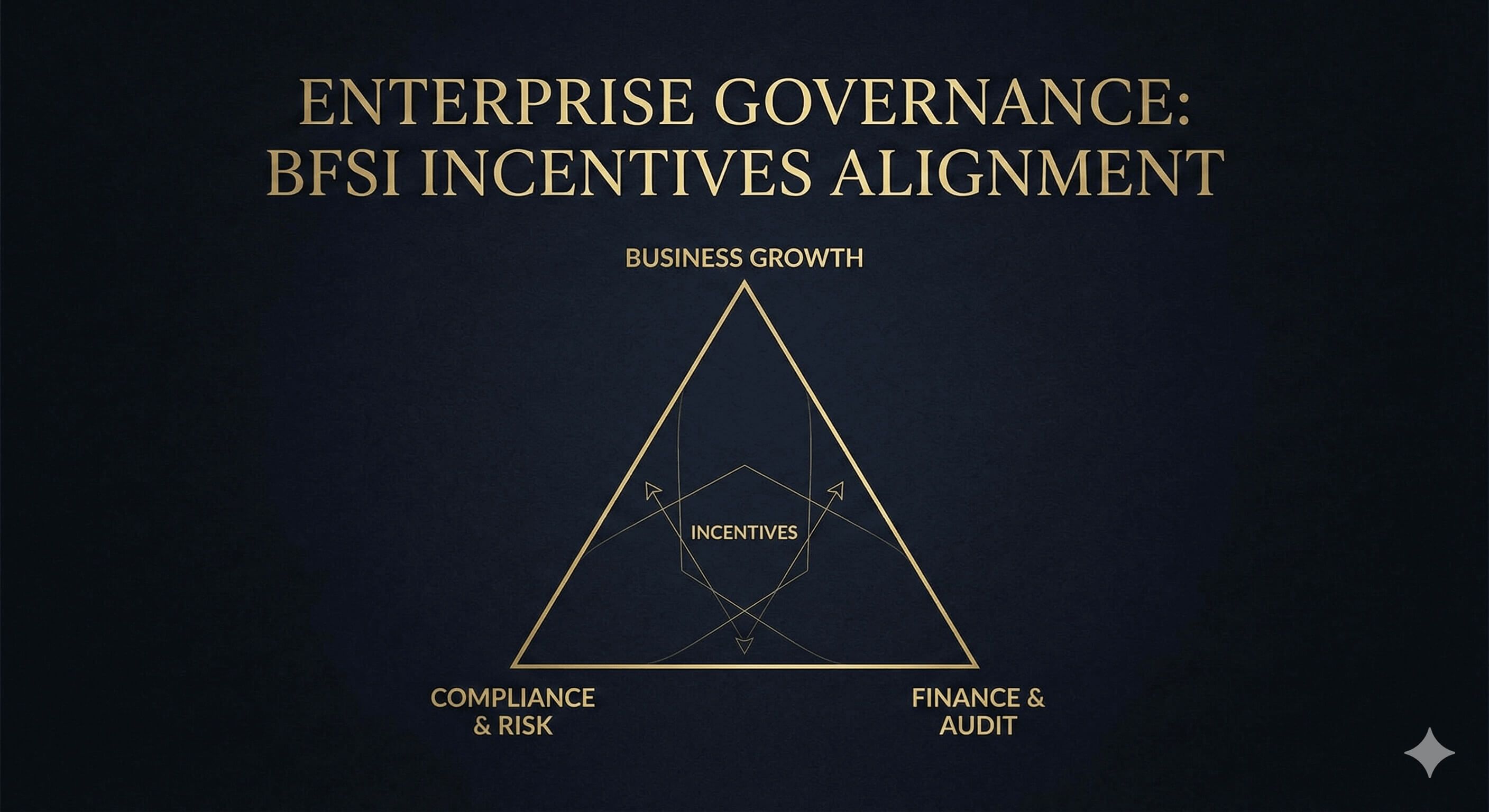

The moment an incentive is introduced, it intersects with three powerful functions:

- Business and revenue teams, accountable for growth, client engagement, and deal progression

- Compliance and risk teams, accountable for regulatory exposure, policy enforcement, and audit readiness

- Finance and procurement teams, accountable for budget control, reconciliation, and reporting integrity

Incentives touch all three. Yet in most institutions, they are formally owned by none.

This creates two common outcomes. Some organizations avoid incentives entirely, prioritizing safety over engagement. Others allow incentives to exist informally, executed through manual workarounds, disconnected vendors, and exception-based approvals.

Neither approach scales.

Internal reviews at large BFSI organizations show that once incentive usage crosses 50–100 events per quarter, manual processes begin to break down rapidly. Exception handling increases by 3–5×, regional policy divergence becomes visible, and audit preparation time expands disproportionately.

The core problem is not whether incentives work. It is whether they can exist as a governed institutional capability.

2. Reframing Incentives: From Rewards to Governed Relationship Signals

In BFSI, incentives cannot be treated as discretionary rewards. To be sustainable, they must be reframed as controlled relationship signals delivered under policy.

This reframing shifts the primary design question.

Instead of asking:

“What should we send?”

Leading institutions ask:

“Under what conditions are incentives allowed to exist?”

Institutions that adopt this framing report a significant reduction in internal resistance. In governance-first programs, compliance escalations related to incentives decline by 60–80%, largely because ambiguity is removed before execution begins.

This reframing is the dividing line between fragile incentive programs and scalable ones.

3. Where Incentives Create Measurable Value in BFSI

Governed incentives are not deployed broadly. They are applied selectively, at moments where relationship signals matter most and governance can be enforced.

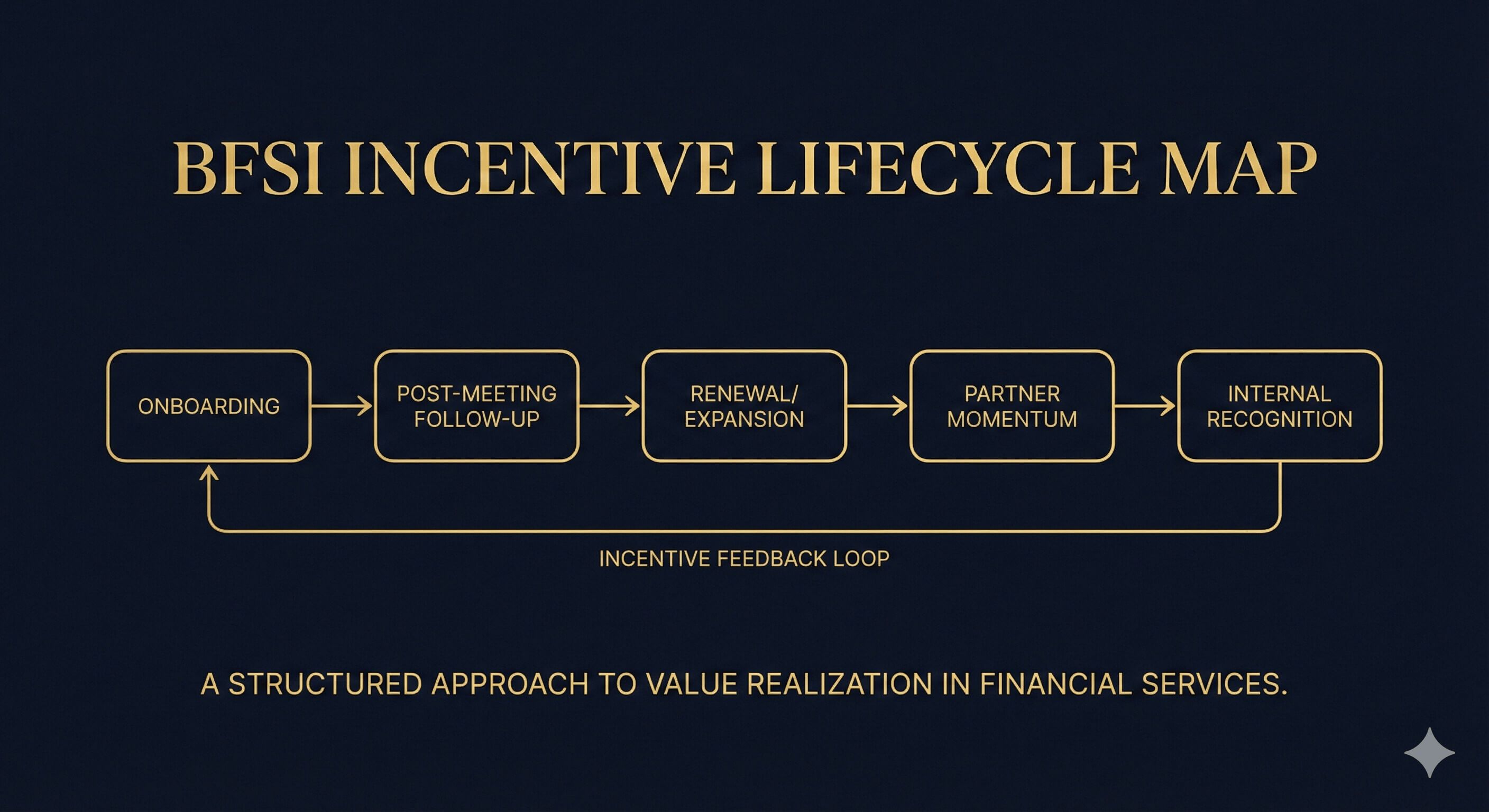

Client Onboarding Milestones

Data across BFSI onboarding programs shows that incentives deployed within the first 14–30 days reduce client follow-up latency by 30–45% and increase early engagement rates by approximately 20–35%.

Post-meeting Follow-ups in Complex Sales Cycles

In long-cycle enterprise sales, governed incentives increase post-meeting response confirmation by 1.7×–2.2×, particularly when delivered within 72 hours of the meeting.

Renewal and Expansion Touchpoints

Institutions using structured incentives during renewal windows report 10–18% improvements in renewal engagement depth, measured by response frequency and multi-stakeholder participation.

Partner and Channel Momentum

Partner programs using governed incentives experience fewer dormant periods, with time between joint actions reduced by 25–40%.

Internal Recognition for High-risk Operational Teams

Internal recognition programs show measurable impact on attrition risk. BFSI teams report 5–12% reductions in voluntary turnover among high-load operational and compliance functions when recognition is standardized and timely.

In each case, incentives function as precision signals, not broad motivational tools.

4. The Operating Model: How Incentives Scale in Regulated Environments

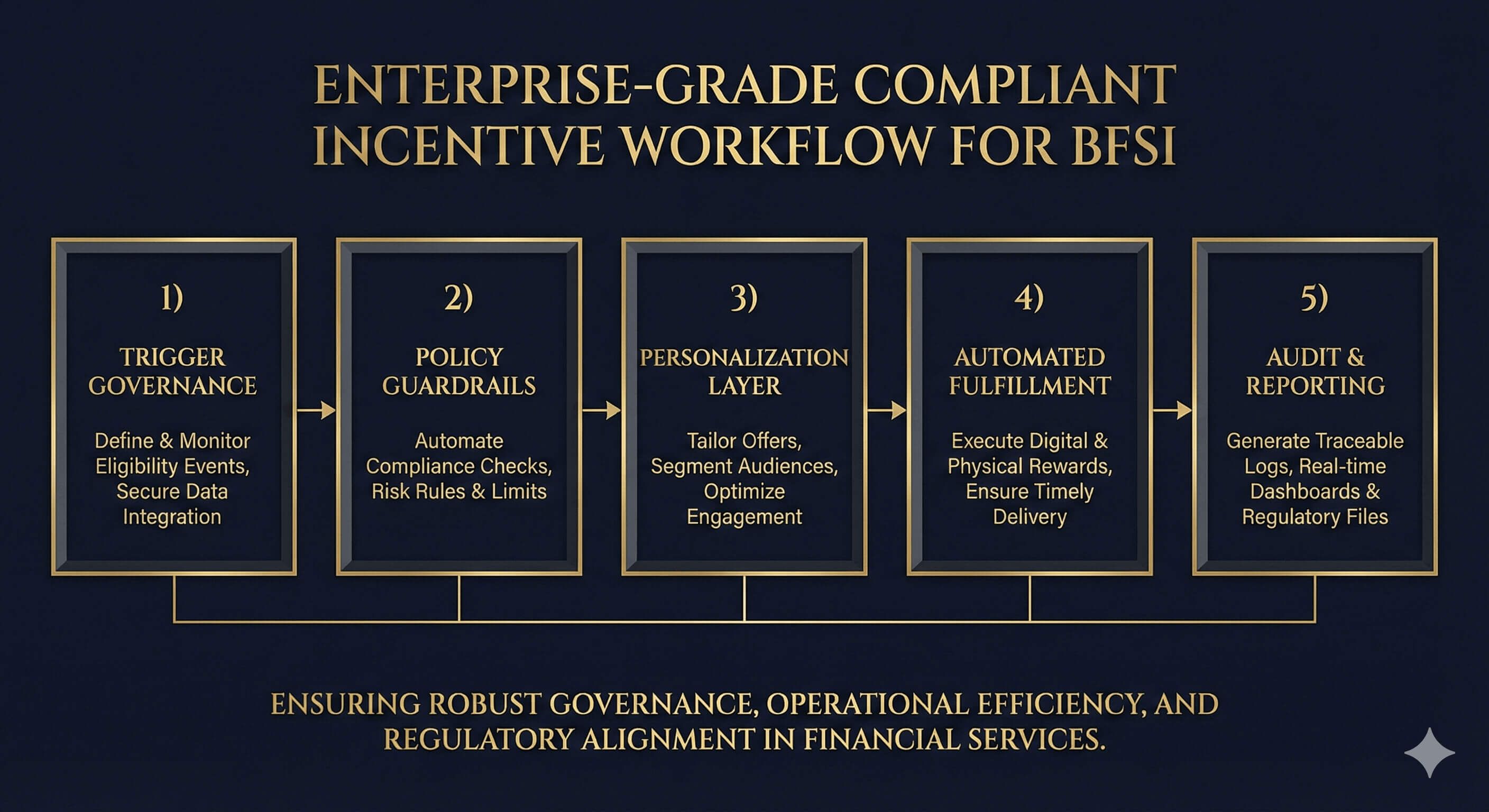

Scalable incentives in BFSI are built on governance, not creativity. Institutions that succeed typically design an operating model composed of five interlocking components.

4.1 Trigger Governance

Programs that enforce explicit triggers report 70–90% fewer discretionary exceptions than programs that rely on manager-led approvals.

4.2 Policy Guardrails

Clear value bands and permitted categories reduce compliance review cycles by 40–60%, while maintaining regional policy alignment.

4.3 A Controlled Personalization Layer

Choice-based personalization achieves satisfaction scores comparable to those of high-customization programs, while reducing compliance review complexity by over 50%.

4.4 Automated Fulfillment

Automation produces the largest operational gains. BFSI teams typically reduce manual handling time per incentive by 80–95%, freeing operations staff for higher-value work.

4.5 Audit and Reporting by Design

Programs with centralized reporting reduce audit preparation effort from weeks to days. In several institutions, incentive-related audit queries drop to near zero once reporting is standardized.
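To make the five components more concrete, the following is a minimal sketch of how explicit triggers, value bands, permitted categories, and an audit record could fit together in a single check. It is not a description of any particular platform. Every name and value in it (the trigger names, categories, regional caps, and the `evaluate` function) is a hypothetical assumption chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical policy values, assumed for illustration only.
ALLOWED_TRIGGERS = {"onboarding_milestone", "post_meeting_followup", "renewal_window"}
PERMITTED_CATEGORIES = {"food", "wellness", "charity_donation"}
VALUE_CAPS_USD = {"US": 50.0, "SG": 40.0}  # assumed maximum value per region


@dataclass(frozen=True)
class IncentiveRequest:
    trigger: str
    category: str
    region: str
    value_usd: float


def evaluate(request: IncentiveRequest) -> dict:
    """Check a request against triggers and guardrails and return an audit record."""
    reasons = []
    if request.trigger not in ALLOWED_TRIGGERS:
        reasons.append("trigger not predefined")
    if request.category not in PERMITTED_CATEGORIES:
        reasons.append("category not permitted")
    cap = VALUE_CAPS_USD.get(request.region)
    if cap is None:
        reasons.append("no value band defined for region")
    elif request.value_usd > cap:
        reasons.append("value exceeds regional band")
    # The record is produced for approvals and rejections alike,
    # so the policy justification can be reconstructed later.
    return {
        "request": request,
        "approved": not reasons,
        "reasons": reasons,
        "evaluated_at": datetime.now(timezone.utc).isoformat(),
    }


record = evaluate(IncentiveRequest("renewal_window", "food", "US", 35.0))
print(record["approved"], record["reasons"])
```

In this sketch a rejected request carries its reasons with it, which is one way exceptions could be tracked and reviewed rather than absorbed informally.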

From Operating Model to Execution

Designing a governance-first incentive model is only the first step.

The more difficult challenge is making that model operable day to day, across teams, regions, and regulatory contexts.

In practice, many BFSI organizations discover that their biggest constraint is not policy alignment, but execution friction. Manual approvals, fragmented vendors, inconsistent fulfillment, and delayed reporting gradually reintroduce the very risks the model was designed to remove.

This is where incentive infrastructure becomes decisive.

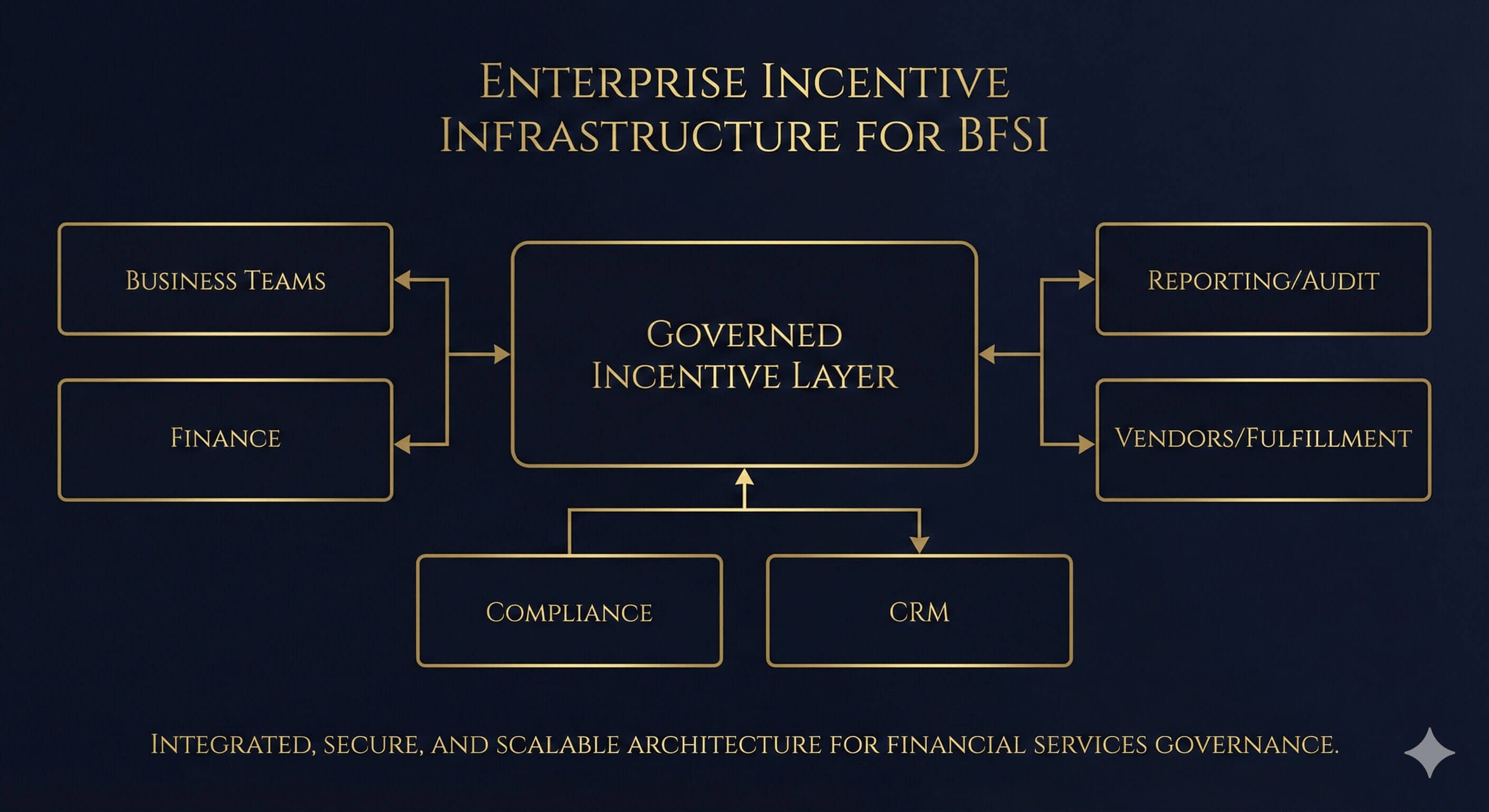

Giftpack operates as an execution layer that translates governance intent into consistent action. Rather than functioning as a gifting tool, it centralizes policy enforcement, automates fulfillment, and maintains audit-ready records across regions and teams.

In environments where incentives must be both effective and defensible, this separation matters: governance defines what is allowed; infrastructure ensures it happens as designed.

The operating patterns described in this article reflect what becomes possible when incentives are treated as infrastructure rather than exceptions.

5. Measuring Success: The Metrics That Matter

Mature BFSI incentive programs track outcomes across three dimensions.

Relationship Velocity

Institutions report 25–50% reductions in time-to-response and measurable improvements in next-step confirmation rates.

Operational Control

Manual processing time per incentive typically drops from 15–30 minutes to under 2 minutes, producing immediate cost savings at scale.

Governance Strength

Exception rates fall below 5% in mature programs, with near-complete audit traceability across regions.

These metrics allow leadership teams to defend incentives as a managed capability rather than a discretionary expense.
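As a rough illustration of how these figures relate, the sketch below computes the relative time reduction, the hours saved per quarter, and an exception rate. The processing times are the ones cited above, with 2 minutes used as an upper bound for "under 2 minutes". The volume of 100 events per quarter and the count of 4 exceptions are assumed sample inputs, and the function names are hypothetical.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, as a percentage."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100.0


def hours_saved(events: int, minutes_before: float, minutes_after: float) -> float:
    """Total handling time saved across `events` incentives, in hours."""
    return events * (minutes_before - minutes_after) / 60.0


def exception_rate(total_incentives: int, exceptions: int) -> float:
    """Share of incentives that required an exception, as a percentage."""
    if total_incentives <= 0:
        raise ValueError("total_incentives must be positive")
    return exceptions / total_incentives * 100.0


# Cited range: 15-30 minutes before, under 2 minutes after.
print(f"{percent_reduction(15, 2):.1f}%")   # 86.7%
print(f"{percent_reduction(30, 2):.1f}%")   # 93.3%

# Assumed volume of 100 events per quarter.
print(f"{hours_saved(100, 15, 2):.1f} h")   # 21.7 h

# Assumed sample: 4 exceptions out of 100 incentives.
print(f"{exception_rate(100, 4):.1f}%")     # 4.0%
```

Because 2 minutes is an upper bound on the "after" time, the computed reductions are lower bounds for the cited range.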

6. Why Infrastructure Determines Outcomes

Across BFSI organizations, incentive programs that fail share a common trait: fragmented execution. Programs that succeed invest in infrastructure that enforces governance automatically.

Institutions operating under a centralized incentive infrastructure report:

- 90%+ reductions in manual operational effort

- Consistent policy enforcement across 30–40+ countries

- Full audit traceability without incremental headcount

This is where platforms like Giftpack operate: not as gifting tools, but as execution layers that make governed incentives practical at scale.

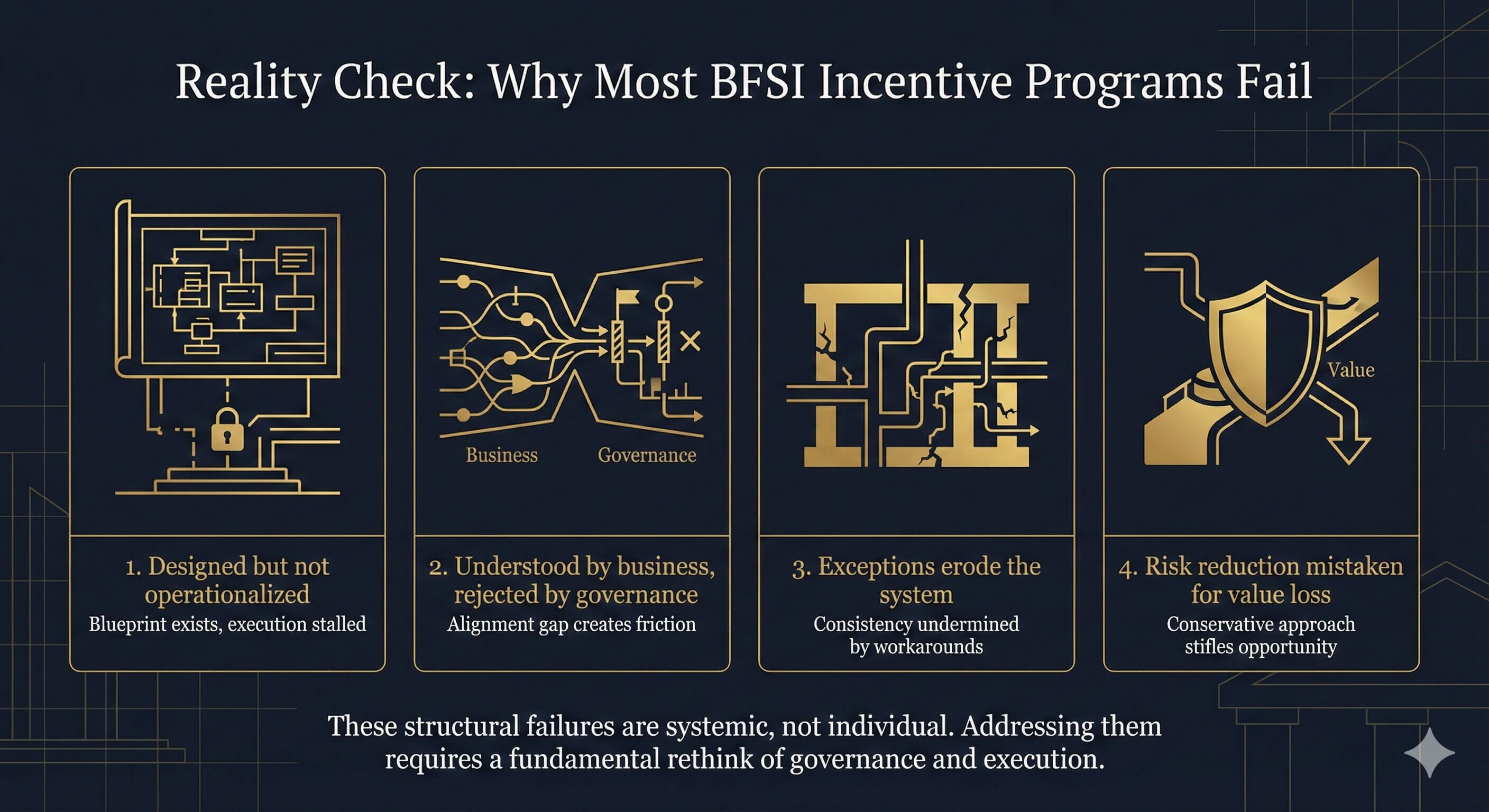

7. Why Most BFSI Incentive Programs Still Fail in Practice

Even when financial institutions agree on the value of incentives, understand the governance principles, and invest time in policy design, most BFSI incentive programs still fail to survive beyond early pilots.

In practice, failure rarely comes from a single design flaw.

It emerges from a series of predictable, structural breakdowns that surface once the program collides with day-to-day operational reality.

Based on advisory work across BFSI organizations, the following failure modes appear most frequently.

Failure Mode 1: The Program Is Designed, but Never Truly Operationalized

Many incentive initiatives perform well in their first quarter.

This is usually because:

- Participation is limited

- Senior attention is high

- Exceptions can still be absorbed manually

Problems emerge once the program enters normal operating cadence.

Common symptoms include:

- Trigger conditions being “interpreted” rather than followed

- Manual coordination gradually replacing defined workflows

- Execution quality drifting across teams and regions

This is not a people problem.

It is a design problem.

In BFSI environments, any process that requires continuous judgment, reminders, or interpretation will eventually be deprioritized.

The uncomfortable reality is this:

Only systems that can be executed correctly without thinking tend to survive long term.

Failure Mode 2: The Program Is Understood by Business, but Not Internalized by Governance

Another common breakdown occurs when incentives are embraced by commercial teams but viewed with unease by Legal, Compliance, Finance, or Audit.

This typically happens when:

- Program documentation emphasizes experience and success stories

- Governance boundaries are implied rather than explicit

- Reporting focuses on activity rather than control

In these cases, the program may be tolerated, but it is never truly endorsed.

Over time, this creates fragility:

- Increased scrutiny

- Slower approvals

- Eventual pressure to reduce or discontinue the program

In BFSI, a program that governance cannot clearly explain will never achieve institutional legitimacy.

Failure Mode 3: Exceptions Are Rationalized Until They Become the System

Every incentive program encounters exceptions.

That is normal.

What creates risk is not the presence of exceptions, but the absence of a mechanism to absorb or eliminate them.

Common warning signs include:

- “This one is special” becoming a recurring justification

- Exceptions being approved but never reviewed

- Edge cases accumulating without policy feedback

Over time, this leads to:

- Boundary erosion

- Execution inconsistency

- Rapidly compounding audit exposure

Mature organizations ask a hard question continuously:

Is this exception meant to become policy, or should it be permanently closed?

Programs that cannot answer this eventually collapse under their own ambiguity.

Failure Mode 4: Risk Reduction Is Mistaken for Value Erosion

The most subtle failure mode is psychological.

As incentives become more structured, more automated, and more predictable, some stakeholders begin to question whether they are still “effective”.

The program feels less special. Less discretionary. Less dramatic.

This often leads to pressure to reintroduce flexibility, customization, or ad-hoc decisions, which ironically re-injects the very risks the governance model was designed to remove.

Advisory evidence consistently shows the opposite:

In BFSI, durable value comes from relationship rhythm and clarity, not surprise or discretion.

The programs that last are rarely the most creative.

They are the most stable.

What the Reality Check Is Really About

This section is not intended to discourage incentive programs.

It exists to surface a core truth:

In BFSI, incentive success is not determined by how clever the design is, but by how well it withstands daily operational friction.

A program will eventually be rejected by the organization if it:

- Requires constant explanation

- Depends on exceptional judgment

- Breaks under scale or staff rotation

A Final Reality Check

Incentives in BFSI do not fail because they are ineffective.

They fail because they are not institutionalized.

Programs that survive are not exciting. They are repeatable, defensible, and boring in the right ways. That is not a weakness.

It is the price of legitimacy in a regulated industry.

Conclusion: Incentives in BFSI Are a Governance Decision

In BFSI, incentives are not a marketing tactic. They are a governance decision.

When treated casually, they introduce risk and resistance. When designed as part of the institution’s operating model, they strengthen relationships, reduce friction, and support defensible growth.

The question for financial institutions is no longer whether incentives work.

It is whether incentives can be governed well enough to scale.

Incentives in BFSI succeed not because they are generous, but because they are designed to survive scrutiny.

A governed incentive program is not a marketing initiative. It is an operating decision.

Incentive Governance Self-Assessment for BFSI Leaders

The checklist below helps assess whether incentives in your organization operate as a governed capability or remain an informal workaround.

Governance & Ownership

- Incentives have a clearly defined executive owner

- Roles across Business, Compliance, and Finance are explicitly documented

- Incentive policies are reviewed on a regular cadence

- Exceptions are tracked and reviewed

⬜ Yes ⬜ Partially ⬜ No

Trigger Discipline

- Incentives are activated only by predefined business triggers

- Triggers are consistently applied across teams

- Discretionary incentives are rare and visible

- Teams understand when incentives are not allowed

⬜ Yes ⬜ Partially ⬜ No

Policy Guardrails

- Value bands are clearly defined

- Permitted categories are documented

- Jurisdiction-specific rules are enforced

- Cash and high-risk instruments are controlled or excluded

⬜ Yes ⬜ Partially ⬜ No

Operational Execution

- Incentives do not rely on spreadsheets or ad-hoc vendors

- Fulfillment does not require repeated manual coordination

- Cross-border delivery is consistent

- Operations teams are not acting as approval bottlenecks

⬜ Yes ⬜ Partially ⬜ No

Audit & Reporting Readiness

- Each incentive has a clear authorization record

- Policy justification can be reconstructed after the fact

- Reporting does not require manual consolidation

- Incentive data is visible at regional and global levels

⬜ Yes ⬜ Partially ⬜ No

Measurement & Outcomes

- Effectiveness is measured beyond redemption

- Response velocity and engagement quality are tracked

- Exception rates are monitored

- Programs can be defended during audit or budget review

⬜ Yes ⬜ Partially ⬜ No

Interpretation

- Mostly “Yes”: incentives operate as a governed capability

- Many “Partially”: incentives work but will strain under scale

- Several “No”: incentives remain fragile and politically sensitive
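For teams that want to tally the checklist consistently, the sketch below shows one possible way to turn the six section answers into one of the three readings above. The checklist does not define "mostly", "many", or "several", so the thresholds here (two or more "No" answers, or more than half "Yes") are assumptions, and the `interpret` function is hypothetical.

```python
from collections import Counter

SECTIONS = [
    "Governance & Ownership",
    "Trigger Discipline",
    "Policy Guardrails",
    "Operational Execution",
    "Audit & Reporting Readiness",
    "Measurement & Outcomes",
]
VALID_ANSWERS = {"Yes", "Partially", "No"}


def interpret(answers: dict) -> str:
    """Map one answer per checklist section to an overall reading."""
    missing = [s for s in SECTIONS if s not in answers]
    if missing:
        raise ValueError(f"missing sections: {missing}")
    invalid = [a for a in answers.values() if a not in VALID_ANSWERS]
    if invalid:
        raise ValueError(f"invalid answers: {invalid}")

    counts = Counter(answers[s] for s in SECTIONS)
    # Assumed threshold: "several No" means two or more sections.
    if counts["No"] >= 2:
        return "fragile and politically sensitive"
    # Assumed threshold: "mostly Yes" means more than half of the sections.
    if counts["Yes"] > len(SECTIONS) / 2:
        return "governed capability"
    return "works but will strain under scale"


sample = dict.fromkeys(SECTIONS, "Yes")
sample["Operational Execution"] = "Partially"
print(interpret(sample))  # governed capability
```

An organization could tighten or loosen these thresholds. What matters is that the same rule is applied across regions and over time.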

Data Sources, Methodology, and Interpretation Notes

The quantitative insights referenced in this article are drawn from a combination of platform-level observations, aggregated customer outcomes, and industry operating benchmarks.

Data Sources

Metrics are informed by:

- Aggregated performance data across Giftpack-supported incentive programs

- Before-and-after comparisons following incentive governance standardization

- Cross-industry benchmarks from BFSI organizations operating in regulated environments

- Qualitative insights from implementation and operational reviews

All data has been:

- Aggregated across multiple organizations

- Anonymized

- Normalized to reduce client-specific bias

No individual institution’s data is identifiable or disclosed.

How to Read the Numbers

Figures cited in this article represent:

- Observed ranges, not guarantees

- Directional impact, not fixed benchmarks

- Context-dependent outcomes, influenced by scale, geography, and governance maturity

Why These Metrics Matter in BFSI

Traditional incentive reporting often emphasizes spend and redemption.

In BFSI, these are insufficient.

The metrics highlighted here prioritize:

- Relationship velocity

- Operational resilience

- Governance durability

These dimensions align more closely with audit scrutiny, executive risk tolerance, and long-term scalability.

A Note on Industry Collaboration

The insights presented reflect ongoing collaboration between Giftpack and BFSI organizations navigating similar regulatory and operational constraints. They represent recurring patterns observed across implementations rather than isolated case outcomes.